With the growth of broadband networks, IP-based video applications have attracted increasing attention. They must address four QoS issues: throughput, transmission delay, delay jitter, and bit error rate. Because most video applications use highly compressed encoding, they are especially sensitive to transmission errors, and the Internet by its nature provides no QoS guarantees for transmission. Improving the error resilience and error recovery capabilities of video applications has therefore been a research hotspot in multimedia communication.

A video communication system typically consists of five parts: video source coding, multiplexing/packetization/channel coding, channel transmission, demultiplexing/depacketization/channel decoding, and video decoding.

Error recovery is particularly important in video communication for the following reasons: (1) because the source encoder uses spatial and temporal predictive coding, compressed video streams are particularly sensitive to transmission errors; (2) the video source and the network environment are usually time-varying, so designing an "optimal" solution based on a statistical model is difficult or even impossible; (3) the video source rate is usually very high, and for some real-time applications the codec cannot be too complex.

Traditionally, error resilience mechanisms are divided into three categories: (1) redundancy introduced in the source encoder and in channel coding, so that the coded stream is more robust to transmission errors; (2) error concealment at the decoder, based on the results of error detection; (3) interaction between the encoder, the transport channel, and the decoder, in which the encoder adjusts its operation based on the detected error information. The discussion that follows focuses on error recovery techniques.

Error recovery encoding

Error recovery encoding at the encoder aims to obtain the maximum error recovery gain with the minimum redundancy. Redundant bits can be added in many ways: some help prevent error propagation, some help the decoder conceal errors better by supporting error detection, and some aim to guarantee a basic level of video quality so that the picture does not deteriorate badly when transmission errors occur. A main reason that coded video is sensitive to transmission errors is its use of temporal and spatial prediction. Once an error occurs, the frame reconstructed at the decoder differs substantially from the original image, and using that frame as a reference causes errors in the reconstruction of subsequent frames.

One way to stop temporal error propagation is to intra-code frames or macroblocks periodically. For real-time applications, intra-coded macroblocks are an effective error recovery tool. The best known way to determine the number and location of intra-coded macroblocks is a rate-distortion optimization scheme based on the packet loss rate. Another way to limit error propagation is to divide the data into multiple segments and restrict temporal and spatial prediction to within the same segment, that is, independent segment prediction. Layered coding (LC) encodes a video into a base layer and one or more enhancement layers. The base layer provides lower but acceptable quality, and each additional enhancement layer progressively improves quality.
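Periodic intra coding of macroblocks can be illustrated with a minimal sketch. It assumes a plain cyclic schedule, which is simpler than the loss-rate-based rate-distortion optimization mentioned above. The function names and the `mbs_per_frame` parameter are hypothetical and are not taken from H.264 or from any encoder.

```python
def intra_refresh_macroblocks(frame_index, total_mbs, mbs_per_frame):
    """Return the macroblock indices to force to intra mode in this frame.

    Assumption: a simple cyclic schedule that walks through the picture in
    raster order, wrapping around at the end. This is an illustration only.
    """
    if total_mbs <= 0 or mbs_per_frame <= 0:
        raise ValueError("total_mbs and mbs_per_frame must be positive")
    count = min(mbs_per_frame, total_mbs)
    start = (frame_index * mbs_per_frame) % total_mbs
    return [(start + i) % total_mbs for i in range(count)]


def refresh_period(total_mbs, mbs_per_frame):
    """Number of consecutive frames needed to intra-code every macroblock once."""
    return -(-total_mbs // mbs_per_frame)  # ceiling division


if __name__ == "__main__":
    # A QCIF picture (176x144) holds 11 x 9 = 99 macroblocks of 16x16 pixels.
    for n in range(3):
        print(n, intra_refresh_macroblocks(n, 99, 11))
    print("period:", refresh_period(99, 11))
```

Refreshing more macroblocks per frame shortens the time an error can persist but costs more bits, which is the trade-off that a rate-distortion optimized scheme tries to balance.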

Network adaptation layer design and new error recovery technology in H.264

H.264 was developed by the Joint Video Team (JVT), formed by MPEG and the ITU. H.264 comprises a video coding layer (VCL) and a network abstraction layer (NAL). The VCL includes the core compression engine and the syntax-level definitions of blocks, macroblocks, and slices, and is designed to be independent of the network. The NAL adapts the bit strings generated by the VCL to a variety of networks and multiplexing environments. It covers all syntax levels above the slice level and provides the following mechanisms: representation of the data required to decode each slice; start code emulation prevention; support for supplemental enhancement information (SEI); and a framing scheme for transmitting the coded bit strings over bitstream-oriented networks.
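Start code emulation prevention can be sketched as follows. In H.264 byte streams, the encoder inserts an extra byte 0x03 whenever two consecutive zero bytes would otherwise be followed by a byte in the range 0x00 to 0x03, so the payload can never imitate a start code prefix (0x000001). This is a simplified illustration with hypothetical function names. It does not handle the special case of a payload that ends in a zero byte.

```python
def add_emulation_prevention(payload: bytes) -> bytes:
    """Insert 0x03 after any two zero bytes that precede a byte <= 0x03."""
    out = bytearray()
    zeros = 0  # number of consecutive zero bytes just written
    for b in payload:
        if zeros >= 2 and b <= 0x03:
            out.append(0x03)  # emulation prevention byte
            zeros = 0
        out.append(b)
        zeros = zeros + 1 if b == 0 else 0
    return bytes(out)


def remove_emulation_prevention(escaped: bytes) -> bytes:
    """Inverse operation: drop each 0x03 that directly follows two zero bytes."""
    out = bytearray()
    zeros = 0
    for b in escaped:
        if zeros >= 2 and b == 0x03:
            zeros = 0  # skip the emulation prevention byte
            continue
        out.append(b)
        zeros = zeros + 1 if b == 0 else 0
    return bytes(out)


if __name__ == "__main__":
    raw = bytes([0x25, 0x00, 0x00, 0x01, 0xFF])
    escaped = add_emulation_prevention(raw)
    print(escaped.hex())
    print(remove_emulation_prevention(escaped) == raw)
```

The decoder-side function restores the original payload, so the escaping is transparent to the VCL.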

There are two main reasons for separating the NAL from the VCL. First, the separation defines an interface between VCL signal processing and NAL transport, which allows the VCL and the NAL to run on entirely different processor platforms. Second, both layers are designed for heterogeneous transport environments, so a gateway does not need to reconstruct and re-encode the VCL bitstream when the network environment changes. Because the protocol stack used in IP networks is RTP/UDP/IP, the discussion here is based primarily on this transport framework. The three error recovery tools in H.264 are analyzed first, followed by the basic processing unit of the NAL, the NAL unit (NALU), and its RTP packetization, aggregation, and fragmentation methods.
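As a rough illustration of NALU handling over RTP, the sketch below parses the one-byte NAL unit header and splits a large NALU into FU-A fragmentation units in the manner described by RFC 6184. The function names and the `max_payload` parameter are hypothetical. The 12-byte RTP header, sequence numbers, and aggregation packets are left out.

```python
FU_A_TYPE = 28  # payload type value used for FU-A fragments in RFC 6184


def parse_nal_header(first_byte):
    """Split the one-byte NAL unit header into its three fields."""
    return {
        "forbidden_zero_bit": first_byte >> 7,
        "nal_ref_idc": (first_byte >> 5) & 0x03,
        "nal_unit_type": first_byte & 0x1F,
    }


def fragment_fu_a(nalu, max_payload):
    """Return RTP payloads (without RTP headers) that carry one NALU.

    A NALU that fits is sent unchanged as a single NAL unit packet.
    Otherwise its body is split into FU-A fragments, each prefixed by a
    FU indicator byte and a FU header byte.
    """
    if len(nalu) <= max_payload:
        return [bytes(nalu)]
    chunk = max_payload - 2  # room left after the two FU bytes
    if chunk <= 0:
        raise ValueError("max_payload too small for FU-A fragmentation")
    header, body = nalu[0], nalu[1:]
    indicator = (header & 0xE0) | FU_A_TYPE  # keep F and NRI bits
    nal_type = header & 0x1F
    packets = []
    for off in range(0, len(body), chunk):
        start_bit = 0x80 if off == 0 else 0x00
        end_bit = 0x40 if off + chunk >= len(body) else 0x00
        fu_header = start_bit | end_bit | nal_type
        packets.append(bytes([indicator, fu_header]) + body[off:off + chunk])
    return packets


if __name__ == "__main__":
    nalu = bytes([0x65]) + bytes(range(10))  # header byte plus dummy payload
    print(parse_nal_header(nalu[0]))
    for p in fragment_fu_a(nalu, 6):
        print(p.hex())
```

A receiver can rebuild the original header byte from the top three bits of the FU indicator and the low five bits of the FU header, then concatenate the fragment bodies in order.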

Error recovery tools

H.264 includes a large number of error recovery tools. Some of them, such as intra-mode placement, reference picture selection (RPS), and data partitioning, were already used in earlier video compression schemes, so only the H.264 improvements to them are described here. Others are new or implemented in an innovative way, such as parameter sets, flexible macroblock ordering (FMO), and redundant slices (RS), and will be analyzed in detail below. The H.264 improvements to intra placement mainly concern when intra coding is used, at which level, how much of it is used, and where. H.264 uses rate-distortion optimization (RDO) to decide these factors, and experiments have verified that this achieves good results.

For RPS, H.264 offers a wider choice: forward or backward reference frames can be used, and there can be up to 15 of them. H.264 defines three types of data partition: (1) header information, including macroblock types, quantization parameters, and motion vectors; this partition is the most important and is called partition A; (2) the intra partition, also called partition B, which contains intra coded block patterns and intra coefficients; it requires partition A of the same slice to be available, and because intra information stops drift better than inter information, it is more important than the inter partition; (3) the inter partition, or partition C, which contains inter coded block patterns and inter coefficients; it is generally the largest partition of a slice and the least important, and it also requires partition A of the same slice to be available.
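The dependency between the three partitions can be sketched as a small check on the receiver side. The sketch assumes the H.264 NAL unit type values 2, 3, and 4 for partitions A, B, and C. The names `PARTITION_BY_NAL_TYPE`, `IMPORTANCE`, and `decodable_partitions` are hypothetical, and a real decoder would also apply error concealment to whatever is missing.

```python
# NAL unit types that carry slice data partitions A, B, and C.
PARTITION_BY_NAL_TYPE = {2: "A", 3: "B", 4: "C"}

# Partitions listed from most to least important, as described in the text.
IMPORTANCE = ["A", "B", "C"]


def decodable_partitions(received_nal_types):
    """Return the partitions of one slice that can actually be used.

    Partitions B and C both depend on partition A, so nothing is usable
    when A is lost. Unrelated NAL unit types are ignored.
    """
    received = {
        PARTITION_BY_NAL_TYPE[t]
        for t in received_nal_types
        if t in PARTITION_BY_NAL_TYPE
    }
    if "A" not in received:
        return []
    return [p for p in IMPORTANCE if p in received]


if __name__ == "__main__":
    print(decodable_partitions([2, 3, 4]))  # all partitions arrived
    print(decodable_partitions([3, 4]))     # partition A lost
    print(decodable_partitions([4, 2]))     # partition B lost
```

Because partition A is needed by the other two, a sender using unequal error protection would typically protect it most strongly.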

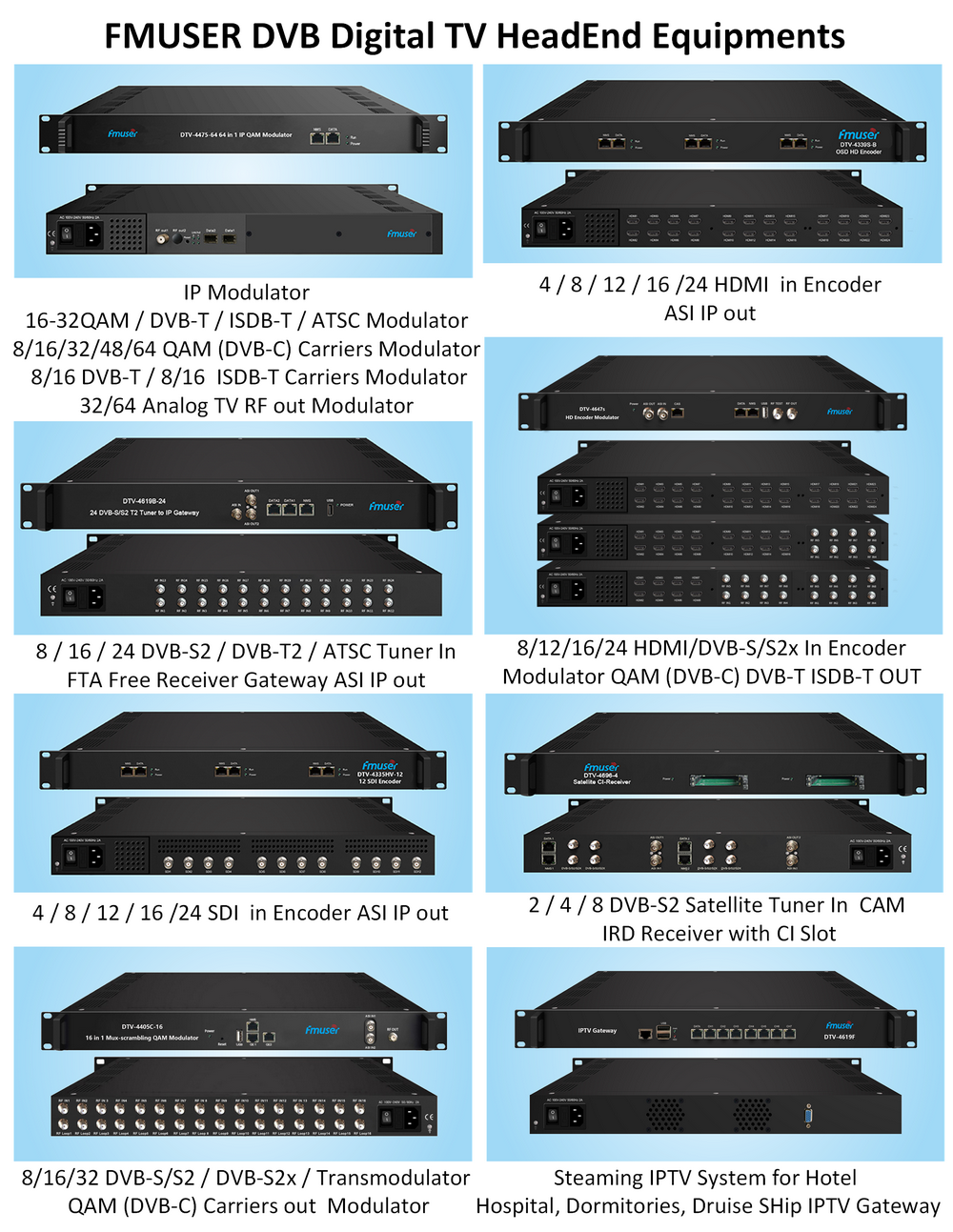

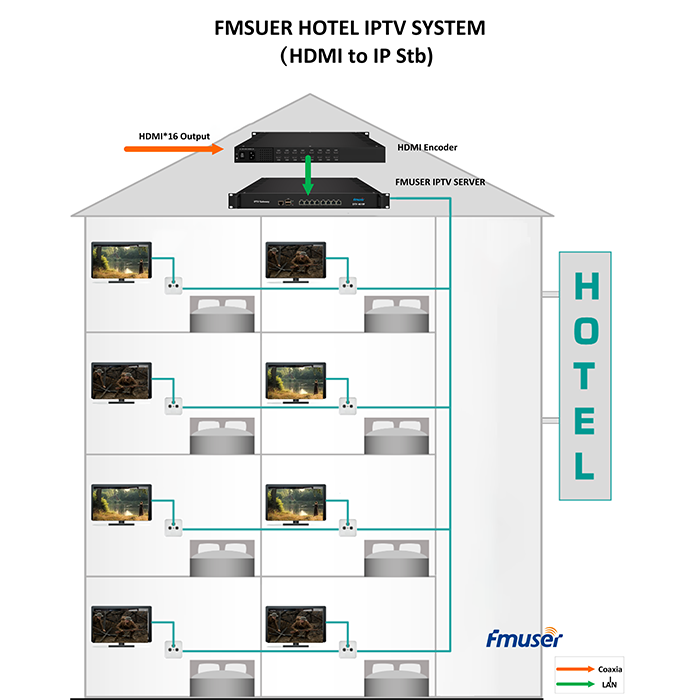
