The method of Lagrange multipliers is arguably one of the most important tools in optimization theory, yet a good introduction that covers the whole method is hard to find. The editor has therefore put together the following article and hopes readers find it useful.

When solving an optimization problem with constraints, the method of Lagrange multipliers and the KKT (Karush-Kuhn-Tucker) conditions are the two most important tools. For problems with equality constraints, the Lagrange multiplier method is used to find the optimal value; if the problem contains inequality constraints, the KKT conditions are used. Note that in general both methods give only necessary conditions; they are guaranteed to be necessary and sufficient only when the problem is convex.

The KKT conditions are a generalization of the method of Lagrange multipliers. When I first studied them, I only knew how to apply the two methods mechanically, without understanding why the Lagrange multiplier method and the KKT conditions work, or why they lead to the optimal value. This article first describes what the Lagrange multiplier method and the KKT conditions are, and then explains why they yield the optimal value.

I. The Lagrange multiplier method and the KKT conditions

The optimization problems we usually need to solve fall into the following classes:

(i) Unconstrained optimization, which can be written as: min f(x);

(ii) Optimization with equality constraints, which can be written as: min f(x), s.t. h_i(x) = 0, i = 1, ..., n;

(iii) Optimization with inequality constraints, which can be written as: min f(x), s.t. g_i(x) <= 0, i = 1, ..., n; h_j(x) = 0, j = 1, ..., m.

For problems of class (i), the usual tool is Fermat's theorem: take the derivative of f(x) and set it to zero to obtain candidate optimal points, then check these candidates. If f is convex, such a candidate is guaranteed to be the optimal solution.

For problems of class (ii), the usual tool is the method of Lagrange multipliers: each equality constraint h_i(x) is multiplied by a coefficient and added to f(x) to form a single expression. This expression is called the Lagrangian function, and the coefficients are called Lagrange multipliers. Taking the derivative of the Lagrangian with respect to each variable and setting it to zero gives the candidate points, which are then checked to find the optimal value.
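The class (ii) recipe can be sketched on a small example. The toy problem below (min x^2 + y^2 subject to x + y - 1 = 0) is an assumption chosen for illustration and does not come from the original article; the helper `solve_linear` is likewise a hypothetical name. Because the objective is quadratic and the constraint is linear, setting the derivatives of the Lagrangian to zero gives a linear system that can be solved exactly.

```python
from fractions import Fraction


def solve_linear(A, b):
    """Solve A x = b by Gauss-Jordan elimination with exact fractions."""
    n = len(A)
    # Build the augmented matrix [A | b] with exact rational entries.
    M = [[Fraction(v) for v in row] + [Fraction(rhs)] for row, rhs in zip(A, b)]
    for col in range(n):
        # Find a row with a nonzero pivot in this column and swap it up.
        pivot = next(r for r in range(col, n) if M[r][col] != 0)
        M[col], M[pivot] = M[pivot], M[col]
        # Normalize the pivot row, then clear this column in every other row.
        M[col] = [v / M[col][col] for v in M[col]]
        for r in range(n):
            if r != col:
                factor = M[r][col]
                M[r] = [vr - factor * vc for vr, vc in zip(M[r], M[col])]
    return [row[n] for row in M]


# Toy problem (assumed for illustration):
#   min f(x, y) = x^2 + y^2   s.t.   h(x, y) = x + y - 1 = 0
# Lagrangian: L(a, x, y) = x^2 + y^2 + a * (x + y - 1)
# Setting each partial derivative to zero gives:
#   dL/dx = 2x + a    = 0
#   dL/dy = 2y + a    = 0
#   dL/da = x + y - 1 = 0
A = [[2, 0, 1],
     [0, 2, 1],
     [1, 1, 0]]
b = [0, 0, 1]
x, y, a = solve_linear(A, b)
print(x, y, a)  # candidate point (x, y) and its multiplier a
```

For this problem the only candidate is x = y = 1/2 with multiplier a = -1. Since f is convex and the constraint is linear, the candidate is the minimum. For non-quadratic problems the stationarity equations are nonlinear and would need a different solver.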

For problems of class (iii), the usual tool is the KKT conditions. In the same way, all equality and inequality constraints are multiplied by coefficients and combined with f(x) into a single expression, again called the Lagrangian function, with the coefficients again called Lagrange multipliers. A set of conditions on this function gives the necessary conditions for an optimal value; these are called the KKT conditions.

(a) The Lagrange multiplier method

For equality constraints, we combine the constraints and the objective function into a single expression through a Lagrange multiplier a: L(a, x) = f(x) + a * h(x). Here a and h(x) are treated as vectors: a is a row vector and h(x) is a column vector. The formula is written this way because mathematical notation is awkward to typeset on CSDN, so this will have to do. Candidates for the optimal value are then obtained by taking the derivative of L(a, x) with respect to each parameter, setting the derivatives to zero, and solving the resulting equations simultaneously. This procedure is taught in advanced mathematics courses, usually without explaining why it works; the idea behind it is briefly introduced in Section II.

(b) The KKT conditions

How do we find the optimal value for a problem with inequality constraints? The usual tool is the KKT conditions. In the same manner, all inequality constraints, equality constraints, and the objective function are written as a single expression L(a, b, x) = f(x) + a * g(x) + b * h(x). The KKT conditions state that the optimal value must satisfy the following:

1. The derivative of L(a, b, x) with respect to x is zero;

2. h(x) = 0;

3. a * g(x) = 0.

Solving these three equations gives the candidate optimal values, where the multiplier must satisfy a >= 0 and x must remain feasible, g(x) <= 0. The third condition is especially interesting: because g(x) <= 0 and a >= 0, it can hold only if, for each inequality constraint, either a = 0 or g(x) = 0. This is the source of many important properties of SVMs, such as the concept of support vectors.
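The "a = 0 or g(x) = 0" alternative suggests a simple way to find candidates: try each case in turn and keep the ones that pass every check. The sketch below does this for an assumed one-dimensional toy problem, min (x - 2)^2 subject to g(x) = x - 1 <= 0, which is not from the original article; the function names are hypothetical.

```python
def kkt_candidates():
    """Enumerate the two complementary-slackness cases for
    min (x - 2)^2  s.t.  g(x) = x - 1 <= 0,
    with Lagrangian L(a, x) = (x - 2)^2 + a * (x - 1)."""
    candidates = []
    # Case 1: a = 0 (constraint inactive).
    # Stationarity 2(x - 2) + a = 0 then gives x = 2.
    candidates.append((2.0, 0.0))
    # Case 2: g(x) = 0 (constraint active), so x = 1.
    # Stationarity then gives a = -2(x - 2) = 2.
    x = 1.0
    candidates.append((x, -2.0 * (x - 2.0)))
    return candidates


def satisfies_kkt(x, a, tol=1e-12):
    """Check stationarity, feasibility, a >= 0, and a * g(x) = 0."""
    stationarity = abs(2.0 * (x - 2.0) + a) <= tol
    primal_feasible = (x - 1.0) <= tol
    dual_feasible = a >= -tol
    slackness = abs(a * (x - 1.0)) <= tol
    return stationarity and primal_feasible and dual_feasible and slackness


valid = [(x, a) for x, a in kkt_candidates() if satisfies_kkt(x, a)]
print(valid)  # (x, a) pairs that pass all checks
```

The inactive case proposes the unconstrained minimizer x = 2, which violates g(x) <= 0 and is rejected. Only the active case x = 1 with a = 2 survives, so the constraint behaves like a "support" for the solution, much as support vectors do in an SVM.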

II. Why do the Lagrange multiplier method and the KKT conditions yield the optimal value?

Why do these methods give the optimal value? Let us start with the Lagrange multiplier method. Suppose our objective function is z = f(x), where x is a vector. Each value of z corresponds to a set of points that can be projected onto the plane (or surface) of x, that is, a contour line. In the figure below, the objective function is f(x, y), where x and y are scalars, and the dashed lines are contour lines.

Now suppose our constraint is g(x) = 0, where x is a vector; on the plane or surface of x this is a curve. If the constraint curve g(x) = 0 crosses a contour line, the crossing point is feasible, since it satisfies the equality constraint, but it is certainly not optimal. Because the curve crosses the contour line, it also reaches other contour lines lying inside or outside this one, on which the objective function takes larger or smaller values. Only where a contour line is tangent to the constraint curve can the point be optimal.

As the figure below shows, at such a point the contour line and the constraint curve have the same normal direction, so the optimal value must satisfy: gradient of f(x) = a * gradient of g(x), where a is a constant, meaning that the two sides are parallel. Up to the sign of a, this equation is exactly the result of setting the derivative of L(a, x) with respect to x to zero.
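The tangency argument can be checked numerically. The sketch below reuses an assumed toy problem, f(x, y) = x^2 + y^2 with constraint g(x, y) = x + y - 1 = 0, which is not taken from the original article. It compares the gradients at the tangent point (0.5, 0.5) and at another feasible point (0.2, 0.8) where the constraint line merely crosses a contour.

```python
def f(x, y):
    return x * x + y * y


def grad_f(x, y):
    return (2.0 * x, 2.0 * y)   # gradient of f(x, y) = x^2 + y^2


def grad_g(x, y):
    return (1.0, 1.0)           # gradient of g(x, y) = x + y - 1


def cross(u, v):
    """2-D cross product; it is zero exactly when u and v are parallel."""
    return u[0] * v[1] - u[1] * v[0]


tangent_point = (0.5, 0.5)    # feasible: 0.5 + 0.5 - 1 = 0
crossing_point = (0.2, 0.8)   # feasible: 0.2 + 0.8 - 1 = 0

cross_at_tangent = cross(grad_f(*tangent_point), grad_g(*tangent_point))
cross_at_crossing = cross(grad_f(*crossing_point), grad_g(*crossing_point))

print("tangent point: ", cross_at_tangent, f(*tangent_point))
print("crossing point:", cross_at_crossing, f(*crossing_point))
# Sliding along the constraint from the crossing point still lowers f:
print("nearby feasible point:", f(0.3, 0.7))
```

At the tangent point the cross product is zero, so the two gradients are parallel. At the crossing point it is nonzero, and moving along the constraint to (0.3, 0.7) reduces f, which is why a crossing point cannot be optimal.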

The KKT conditions are necessary conditions for the optimum of a problem that satisfies strong duality. They can be understood as follows. We want min f(x), with L(a, b, x) = f(x) + a * g(x) + b * h(x) and a >= 0. For feasible x, we can write f(x) as max_{a, b} L(a, b, x). Why? Because h(x) = 0 and g(x) <= 0, and we are maximizing L(a, b, x) over a >= 0: the term a * g(x) is <= 0, so L(a, b, x) reaches its maximum only when a * g(x) = 0. If x violates the constraints, the maximum is unbounded. So when the constraints are satisfied, max_{a, b} L(a, b, x) equals f(x), and our objective can be written as min_x max_{a, b} L(a, b, x).

The dual expression is max_{a, b} min_x L(a, b, x). Because our problem satisfies strong duality (strong duality means that the optimal value of the dual problem equals the optimal value of the primal problem), the optimal point x0 satisfies f(x0) = max_{a, b} min_x L(a, b, x) = min_x max_{a, b} L(a, b, x) = f(x0). Let us look at what happens in the middle of this chain:

f(x0) = max_{a, b} min_x L(a, b, x)

= max_{a, b} min_x [f(x) + a * g(x) + b * h(x)]

= max_{a, b} [f(x0) + a * g(x0) + b * h(x0)]

= f(x0)

The key step above is the third equality. In essence it says that min_x [f(x) + a * g(x) + b * h(x)] attains its minimum at x0. By Fermat's theorem, the derivative of the function f(x) + a * g(x) + b * h(x) with respect to x must be zero there, that is: gradient of f(x) + a * gradient of g(x) + b * gradient of h(x) = 0

This is the first of the KKT conditions: the derivative of L(a, b, x) with respect to x is zero.

Moreover, the last equality requires a * g(x0) = 0, which is the third KKT condition. And of course the given constraint h(x) = 0 must hold. Taken together, this shows that the optimal value of an optimization problem satisfying strong duality must meet the KKT conditions, that is, the three conditions described above. The KKT conditions can therefore be regarded as a generalization of the method of Lagrange multipliers.

Original title: Machine Learning Basics: In-Depth Understanding of Lagran...
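The duality argument can be traced on a small case. The sketch below assumes a toy problem that is not in the original article: min x^2 subject to g(x) = 1 - x <= 0, with no equality constraint. For this problem the inner minimization has the closed form x = a / 2 (from setting 2x - a = 0), and the outer maximization is done by a coarse grid search; both choices are illustrative only.

```python
def f(x):
    return x * x


def g(x):
    return 1.0 - x          # constraint g(x) <= 0, i.e. x >= 1


def lagrangian(a, x):
    return f(x) + a * g(x)


def dual(a):
    """q(a) = min_x L(a, x); for this toy problem the minimizer is x = a / 2."""
    return lagrangian(a, a / 2.0)


# Coarse grid search over a >= 0 (step 0.01 on [0, 5]) for max_a q(a).
grid = [i / 100.0 for i in range(501)]
best_a = max(grid, key=dual)
x0 = best_a / 2.0           # minimizer of L(best_a, x)

print("best multiplier a:      ", best_a)
print("dual optimum q(a):      ", dual(best_a))
print("primal value f(x0):     ", f(x0))
print("complementary slackness:", best_a * g(x0))
```

Here the dual optimum and the primal value f(x0) coincide, so strong duality holds for this problem, and the product a * g(x0) vanishes at the optimum, matching the third KKT condition.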

Article source: WeChat official account 21ic Electronics Network (WeChat ID: weixin21ic). Please credit the source when reposting.

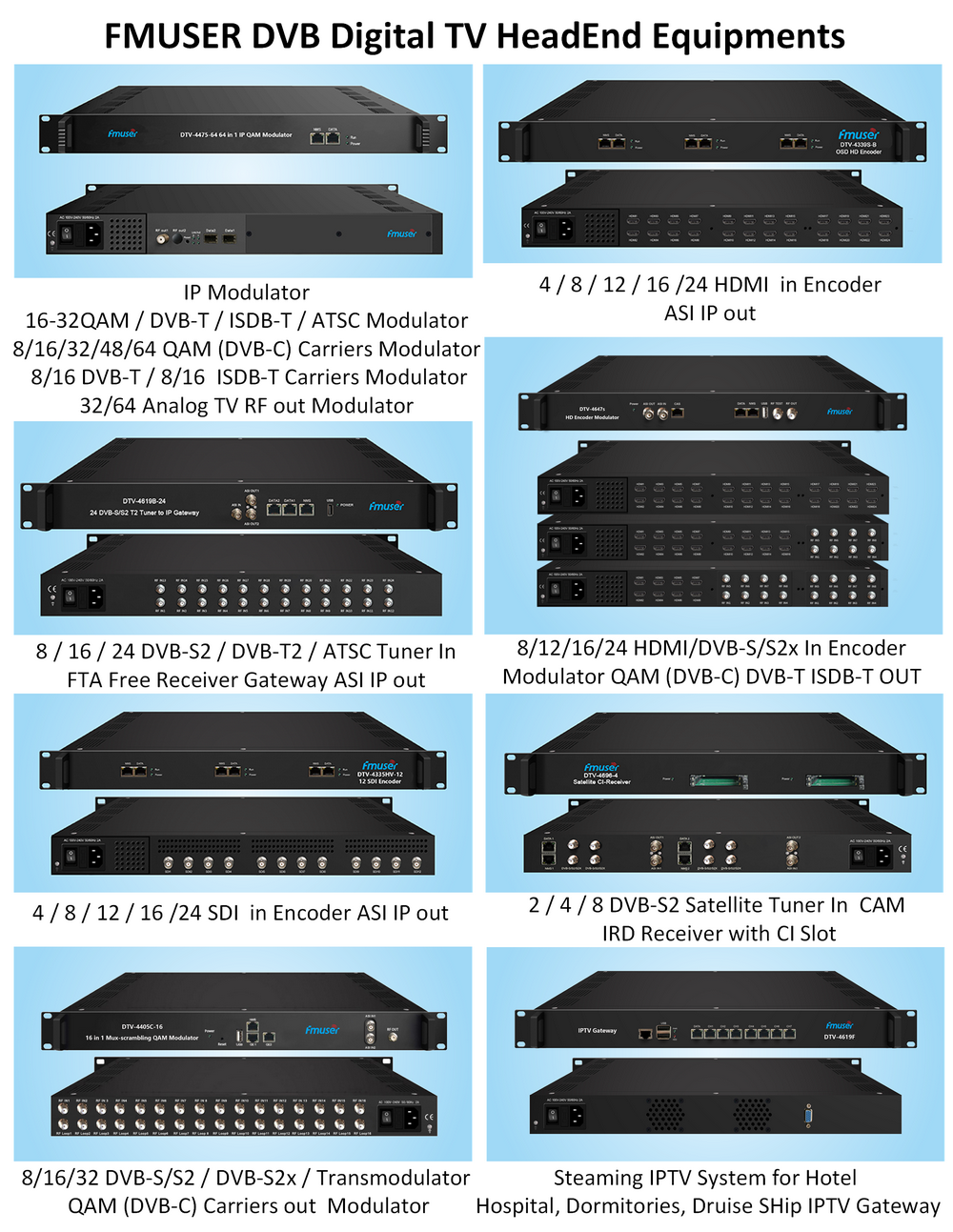

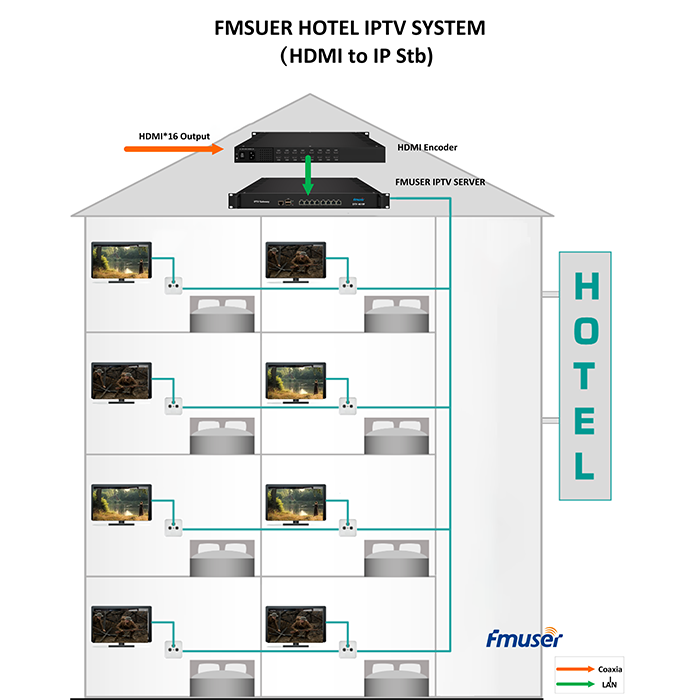
