Deep learning techniques offer a major advantage in reducing error rates for computer vision recognition and classification. Implementing deep neural networks in embedded systems helps machines visually interpret facial expressions with near-human accuracy.

Recognizing facial expressions and emotions is a basic and very important skill acquired early in human social interaction. Humans can look at a person's face and quickly recognize common emotions: anger, happiness, surprise, disgust, sadness, and fear. Transferring this skill to a machine is a complex task. Researchers have spent decades of engineering effort writing computer programs that accurately recognize one feature, only to have to start over to recognize a feature that differs only slightly.

So what if, instead of programming the machine, you could directly teach it to recognize emotions accurately?

Deep learning techniques make this possible by greatly reducing error rates in computer vision recognition and classification. A deep neural network (see Figure 1) implemented in an embedded system can help a machine visually interpret facial expressions with near-human accuracy.

Figure 1: Simple example of a deep neural network

A neural network learns to recognize patterns through training, and it is considered "deep" if it has an input layer, an output layer, and at least one hidden layer in between. The value of each node is calculated from the weighted inputs of multiple nodes in the previous layer. These weights can be adjusted to perform a specific image recognition task. This adjustment is called the neural network training process.
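As a rough illustration of how each node is calculated from the weighted values of the previous layer, here is a minimal Python sketch. The layer sizes, weight values, and the sigmoid activation are assumptions chosen for illustration; the article does not specify them.

```python
import math

def sigmoid(x):
    # Assumed activation function; the article does not name one.
    return 1.0 / (1.0 + math.exp(-x))

def layer_forward(inputs, weights, biases):
    """Compute one layer: each node is the activation of the
    weighted sum of all nodes in the previous layer plus a bias."""
    return [
        sigmoid(sum(w * x for w, x in zip(node_weights, inputs)) + b)
        for node_weights, b in zip(weights, biases)
    ]

def network_forward(inputs, layers):
    """Pass the input through every layer in order."""
    activations = inputs
    for weights, biases in layers:
        activations = layer_forward(activations, weights, biases)
    return activations

# Hypothetical network: 3 inputs -> 2 hidden nodes -> 1 output node.
layers = [
    ([[0.5, -0.2, 0.1], [0.3, 0.8, -0.5]], [0.0, 0.1]),  # hidden layer
    ([[1.0, -1.0]], [0.0]),                               # output layer
]
output = network_forward([0.2, 0.7, 0.1], layers)
print(output)
```

Because there is one hidden layer between input and output, this toy network already counts as "deep" in the sense described above. Training consists of adjusting the numbers in `layers`.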

For example, to train a deep neural network to recognize photos of a happy smile, we present the raw data (image pixels) at the input layer. Knowing that the expected result is "happy", the network recognizes patterns in the picture and adjusts the node weights to minimize the error for pictures of the happy class. Each new annotated picture that shows a happy expression helps refine the weights. After training on enough inputs, the network can take in unlabeled pictures and accurately analyze and recognize the patterns that correspond to a happy expression.
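The weight-adjustment loop can be sketched with a single output node trained by gradient descent. This is a deliberately simplified stand-in for a real deep network: the four-pixel "images", the labels, the learning rate, and the epoch count are all hypothetical.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def predict(weights, bias, pixels):
    """Score between 0 and 1; higher means 'more likely happy'."""
    return sigmoid(sum(w * p for w, p in zip(weights, pixels)) + bias)

def train(samples, epochs=2000, lr=0.5):
    """Adjust the weights so the error on the labeled samples shrinks."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for pixels, label in samples:
            # Difference between the prediction and the annotation.
            error = predict(weights, bias, pixels) - label
            # Move each weight against its contribution to the error.
            weights = [w - lr * error * p for w, p in zip(weights, pixels)]
            bias -= lr * error
    return weights, bias

# Hypothetical annotated data: label 1 = happy, label 0 = not happy.
samples = [
    ([0.9, 0.8, 0.1, 0.2], 1),
    ([0.8, 0.9, 0.2, 0.1], 1),
    ([0.1, 0.2, 0.9, 0.8], 0),
    ([0.2, 0.1, 0.8, 0.9], 0),
]
weights, bias = train(samples)

# An unlabeled picture that resembles the happy samples.
unlabeled = [0.85, 0.75, 0.15, 0.25]
score = predict(weights, bias, unlabeled)
print(score)
```

Once trained, `predict` needs no annotation: it scores the unlabeled picture purely from the learned weights.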

Deep neural networks require a lot of computing power to calculate the weighted values of all these interconnected nodes. Sufficient data memory and efficient data movement are also important. Convolutional neural networks (CNNs, see Figure 2) are currently the most efficient state-of-the-art deep neural networks for vision. CNNs are more efficient because they reuse a large amount of weight data across the image. They exploit the two-dimensional (2D) input structure of the data to reduce redundant computation.
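Weight reuse can be illustrated with a small 2D convolution, in which the same kernel weights are applied at every position of the image. The 4x4 image and the 2x2 edge kernel below are hypothetical. Like most CNN frameworks, the sketch does not flip the kernel, so it is strictly a cross-correlation.

```python
def conv2d(image, kernel):
    """Slide one small kernel over the 2D image (no padding, stride 1).
    The same kernel weights are reused at every output position."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(kernel[i][j] * image[r + i][c + j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]

# Hypothetical 4x4 image with a vertical edge between columns 1 and 2.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Hypothetical 2x2 kernel that responds to dark-to-bright vertical edges.
edge_kernel = [[-1, 1], [-1, 1]]

feature_map = conv2d(image, edge_kernel)

# Weight count: shared kernel vs. a fully connected layer of the same shape.
conv_weights = len(edge_kernel) * len(edge_kernel[0])
dense_weights = (4 * 4) * (3 * 3)  # every input pixel to every output node
print(feature_map, conv_weights, dense_weights)
```

Here the convolution produces a 3x3 feature map from only 4 weights, whereas a fully connected layer mapping the same 16 inputs to 9 outputs would need 144.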

Figure 2: Convolutional neural network architecture for face analysis (schematic)

Implementing a CNN for face analysis involves two distinct and independent phases. The first is the training phase, and the second is the deployment phase.

The training phase (see Figure 3) requires a deep learning framework, such as Caffe or TensorFlow, which uses central processing units (CPUs) and graphics processing units (GPUs) for the training computations, along with knowledge of how to use the framework. These frameworks typically provide example CNN graphs that can be used as a starting point. The deep learning framework allows the graph to be fine-tuned: layers can be added, removed, or modified to achieve the best possible accuracy.

Figure 3: CNN training phase

One of the biggest challenges in the training phase is finding the right dataset to train the network. The accuracy of a deep network depends heavily on the distribution and quality of the training data. Options to consider for face analysis include emotion-annotated datasets and multi-annotated private datasets from VicarVision (VV).

For real-time embedded designs, the deployment phase (see Figure 4) can be implemented on an embedded vision processor, such as the Synopsys DesignWare EV6X embedded vision processor with a programmable CNN engine. An embedded vision processor is the best choice for balancing performance against small area and low power.

Figure 4: CNN deployment phase

Although the scalar unit and the vector unit are programmed in C and OpenCL C (for vectorization), the CNN engine does not have to be programmed manually. The final graph and weights (coefficients) from the training phase are fed into a CNN mapping tool, after which the CNN engine of the embedded vision processor is configured and ready to perform face analysis.

Images or video frames captured by the camera and image sensor are fed into the embedded vision processor. A CNN has difficulty handling large variations in lighting conditions or face pose, so the images are preprocessed to make the faces more uniform. The heterogeneous architecture of an advanced embedded vision processor with a CNN engine allows the CNN engine to classify one image while the vector unit preprocesses the next image (light normalization, image scaling, in-plane rotation, and so on) and the scalar unit handles decision making (that is, what to do with the CNN detection results).
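Two of the preprocessing steps named above, light normalization and image scaling, can be sketched as follows. The specific methods (min-max contrast stretching and nearest-neighbor resampling) and the sample pixel values are assumptions for illustration. The article does not say which algorithms the vector unit actually runs.

```python
def normalize_light(image):
    """Stretch pixel values to the range 0.0..1.0 (min-max normalization),
    so dark and bright captures of a face look more alike."""
    flat = [p for row in image for p in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:
        # Flat image: no contrast to stretch.
        return [[0.0 for _ in row] for row in image]
    return [[(p - lo) / (hi - lo) for p in row] for row in image]

def scale_nearest(image, out_h, out_w):
    """Resize to out_h x out_w by nearest-neighbor sampling."""
    in_h, in_w = len(image), len(image[0])
    return [
        [image[r * in_h // out_h][c * in_w // out_w] for c in range(out_w)]
        for r in range(out_h)
    ]

def preprocess(image, out_h, out_w):
    """Scale first (fewer pixels to normalize), then normalize lighting."""
    return normalize_light(scale_nearest(image, out_h, out_w))

# Hypothetical underexposed 4x4 grayscale crop (values 0..255).
dark_face = [
    [10, 12, 20, 22],
    [11, 13, 21, 23],
    [30, 32, 40, 42],
    [31, 33, 41, 50],
]
cnn_input = preprocess(dark_face, 2, 2)
print(cnn_input)
```

On the processor described here, this kind of work would run on the vector unit for the next frame while the CNN engine classifies the current one.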

Image resolution, frame rate, number of graph layers, and desired accuracy all have to be weighed against the number of parallel multiply-accumulate (MAC) operations required and the performance requirements. The Synopsys EV6X embedded vision processor with CNN, built on 28nm process technology, runs at 800MHz while delivering up to 880 MACs.
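A back-of-the-envelope estimate shows how these factors interact. The layer shapes and the frame rate below are hypothetical, and the sketch assumes that the 880 MAC figure means MACs per clock cycle, which the text above does not state explicitly.

```python
def conv_layer_macs(out_h, out_w, out_channels, kernel_h, kernel_w, in_channels):
    """MACs for one convolution layer: one multiply-accumulate per kernel
    weight, per input channel, per output value."""
    return out_h * out_w * out_channels * kernel_h * kernel_w * in_channels

# Hypothetical small CNN: (out_h, out_w, out_ch, k_h, k_w, in_ch) per layer.
layers = [
    (48, 48, 16, 3, 3, 1),
    (24, 24, 32, 3, 3, 16),
    (12, 12, 64, 3, 3, 32),
]
macs_per_frame = sum(conv_layer_macs(*layer) for layer in layers)

frames_per_second = 30  # assumed frame rate
required_macs_per_second = macs_per_frame * frames_per_second

# Assumption: 880 MACs per cycle at an 800 MHz clock.
peak_macs_per_second = 880 * 800_000_000

utilization = required_macs_per_second / peak_macs_per_second
print(macs_per_frame, required_macs_per_second, f"{utilization:.4%}")
```

Raising the resolution, the frame rate, or the number of layers scales `required_macs_per_second` directly, which is why these parameters have to be weighed against the available parallel MAC capacity.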

Once a CNN has been trained to detect emotions, it can be reconfigured more easily to handle other face analysis tasks, such as determining an age range, identifying gender or ethnicity, and recognizing hairstyle or whether the person is wearing glasses.

Summary

CNNs that run on embedded vision processors open up a new field of vision processing. Soon we will be surrounded by electronic products that can interpret emotions, such as devices that detect happiness and electronic teachers that gauge a student's level of understanding by recognizing facial expressions. Deep learning, embedded vision processing, and high-performance CNNs will soon make this vision a reality.

Original link: https://www.eeboard.com/news/analysis/

Search for the panel network, pay attention, daily update development board, intelligent hardware, open source hardware, activity and other information can make you master. Recommended attention!

[WeChat scanning picture can be directly paid]

Technology early know:

Shoot a detector to the sun, this is not a joke!

Real black technology, brain microscope: Close your eyes, you can see the surroundings

Core, expensive, Intel New 12-core processor i9-7920X what and AMD are 飚?

"Mechanism" artifact: portable DIY black rubber record machine

How to use the RENESAS SYNERGY S3A7 Internet of Things fast prototype development kit accelerate the network development process

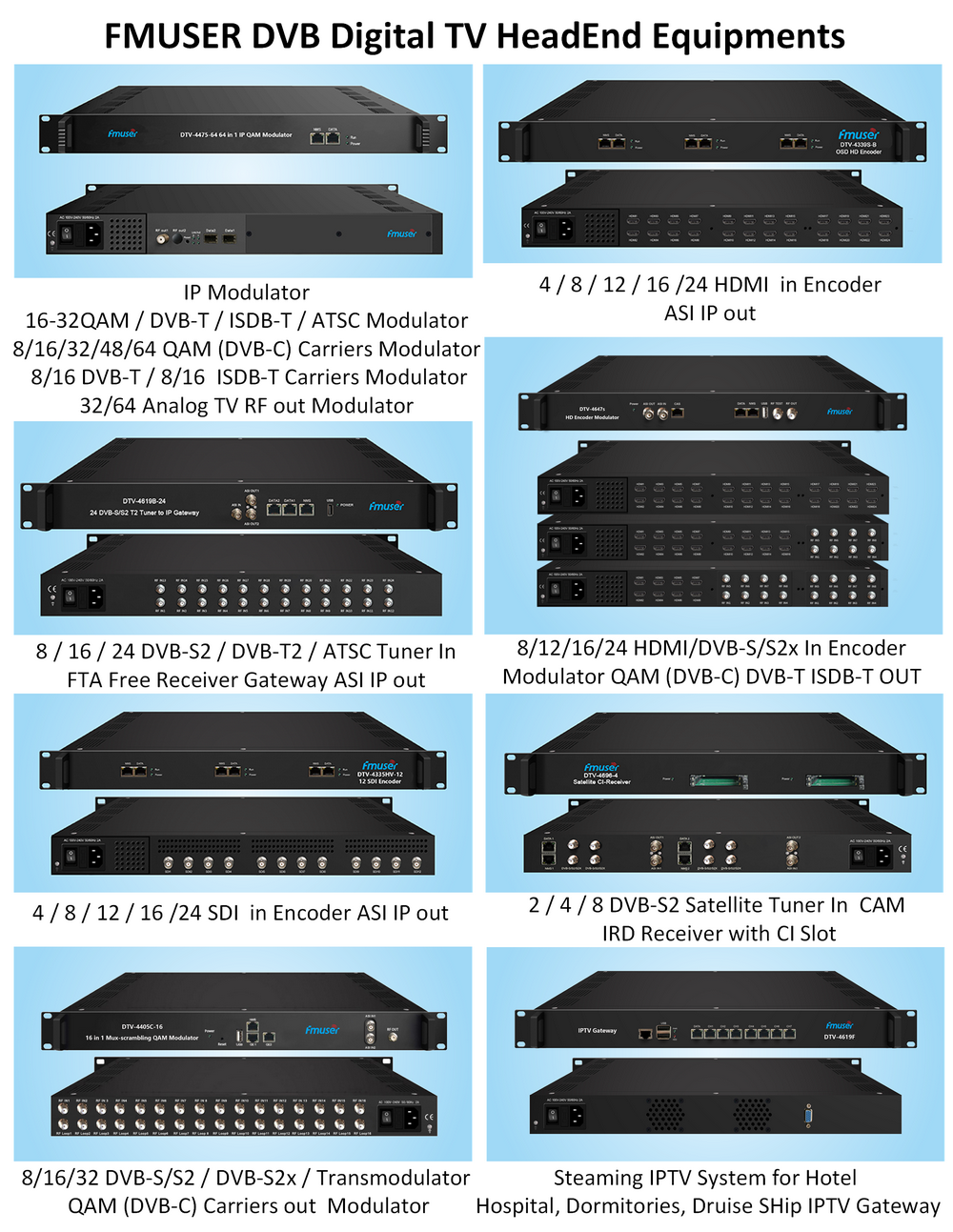

Our other product: